Orbital Data Centers: Technical Validation and Strategic Positioning in the 2025-2030 Transition Period

Executive Summary

In November 2025, Starcloud deployed the first NVIDIA H100 GPU to orbit aboard a 60 kg satellite and executed AI workloads in space, including Google's Gemma and NanoGPT training. The mission validated that data-center-class processors can operate under radiation, thermal, and vacuum conditions previously considered prohibitive. This breakthrough advances orbital computing from conceptual research to hardware demonstrations at Technology Readiness Level (TRL) 6-7. This white paper examines five years of technical maturation (2020-2025) across four subsystems: power generation achieving 95-99% solar capacity factors, passive thermal management dissipating 100-350 W/m² through radiative cooling, radiation mitigation protecting high-performance GPUs via hybrid COTS/rad-hard approaches, and optical inter-satellite links delivering 2.5-100 Gbps connectivity for edge processing architectures. Launch costs are trending toward projected sub-$200/kg levels by the mid-2030s, and commercial platforms, including Axiom Space's Orbital Data Center nodes, are moving from ISS demonstrations to free-flying constellations. Together these shifts make 2025-2030 a strategic positioning window for satellite edge computing, AI inference infrastructure, and Earth-independent cloud services within the $480 billion commercial space economy.

Technical analysis examining five years of orbital computing maturation (2020-2025) across Starcloud's H100 GPU satellite, Axiom Space Orbital Data Center nodes, and China's Three-Body Constellation. Documents validated performance across power generation (95-99% capacity factors), passive thermal management (100-350 W/m²), radiation mitigation for COTS processors, and optical inter-satellite links (2.5-100 Gbps). 58-page PDF providing technical specifications, architecture comparisons, and market segmentation frameworks for aerospace engineers and infrastructure investors evaluating the $1.78B-to-$39B orbital computing sector through 2035.

Research Context: Terrestrial Constraints and Orbital Alternatives

Terrestrial data centers confront fundamental sustainability constraints. Their electricity demand, driven increasingly by AI workloads, is projected to reach up to 9% of U.S. consumption by 2030. Evaporative cooling systems require billions of gallons of water annually. And the limited capacity to reject heat into the surrounding air caps rack-level power density unless operators invest in increasingly costly cooling infrastructure. This white paper examines orbital alternatives demonstrated by Starcloud's H100 GPU satellite (November 2025), Axiom Space's Orbital Data Center nodes achieving 2.5 Gbps optical connectivity (2025), China's Three-Body Constellation delivering 5 petaflops across 12 satellites with 100 Gbps inter-satellite links (May 2025), and HPE's Spaceborne Computer-2, which has validated COTS server operation on the ISS since February 2021.

The analysis covers four areas:

- power systems leveraging unattenuated 1,367 W/m² solar irradiance;
- thermal architectures governed by Stefan-Boltzmann physics, which impose 5-10 kg/m² radiator mass penalties;
- radiation hardening strategies that balance fully rad-hard processors against shielded commercial components;
- LEO latencies of 45-80 milliseconds.

Industry projections place the orbital data center sector, currently in its demonstration phase, at $1.78 billion by 2029 and $39 billion by 2035. That growth is contingent upon launch cost reductions and thermal management breakthroughs.

SpaceX Starship targets 25+ launches in 2025 on the path toward projected sub-$200/kg economics, and the ISS approaches its 2030 retirement. Against that backdrop, commercial platforms are positioned to inherit validated orbital computing capacity during the 2026-2030 transition window.

Validated Technical Capabilities Across Four Critical Subsystems

Solar Power Generation and Continuous Energy Availability

Dawn-dusk sun-synchronous orbits avoid atmospheric attenuation and keep arrays in near-continuous sunlight. They achieve 95-99% capacity factors, compared with 15-25% terrestrial averages. Deployable photovoltaic arrays demonstrate 30-200 W/kg specific power with III-V multi-junction cells, and NEC Corporation's thin-membrane designs have reached 150 W/kg in ground testing. The fundamental advantage is the unattenuated 1,367 W/m² solar irradiance continuously available in space. Terrestrial installations, by contrast, experience nighttime interruptions, cloud cover, and atmospheric losses that reduce peak values by 35-40%.
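
The capacity-factor and specific-power figures above translate directly into energy yield and array mass. The sketch below is a back-of-the-envelope calculation, not a design tool. The 30% cell efficiency is an assumed value for III-V multi-junction cells, and the sketch ignores pointing losses, packing factor, and radiation degradation.

```python
SOLAR_CONSTANT_W_M2 = 1367.0  # irradiance figure used in this paper
HOURS_PER_YEAR = 8760.0


def annual_energy_mwh(nameplate_kw: float, capacity_factor: float) -> float:
    """Energy delivered per year (MWh) by an array of the given nameplate power."""
    return nameplate_kw * capacity_factor * HOURS_PER_YEAR / 1000.0


def array_area_m2(load_w: float, cell_efficiency: float) -> float:
    """Sunlit area needed to supply load_w at normal incidence.

    Ignores degradation, pointing error, and packing losses (assumption).
    """
    return load_w / (SOLAR_CONSTANT_W_M2 * cell_efficiency)


def array_mass_kg(load_w: float, specific_power_w_per_kg: float) -> float:
    """Array mass implied by a given specific power (W/kg)."""
    return load_w / specific_power_w_per_kg


if __name__ == "__main__":
    orbit = annual_energy_mwh(100, 0.97)   # mid-range orbital capacity factor
    ground = annual_energy_mwh(100, 0.20)  # mid-range terrestrial capacity factor
    print(f"100 kW nameplate: orbit {orbit:.0f} MWh/yr vs ground {ground:.0f} MWh/yr")
    for sp in (30, 150, 200):
        print(f"100 kW array at {sp} W/kg: {array_mass_kg(100_000, sp):,.0f} kg")
    # 30% efficiency is an assumed value, not a figure from the text.
    print(f"Area at 30% efficiency: {array_area_m2(100_000, 0.30):.0f} m^2")
```

At 200 W/kg, a 100 kW array needs about 500 kg of array mass. At 30 W/kg, the same load needs roughly 3,300 kg, which is why specific power matters as much as capacity factor.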

NASA's Print-Assisted Photovoltaic Assembly (PAPA) technology demonstrates autonomous robotic assembly. It reduces costs from $250-350/W to $25-35/W while enabling arrays exceeding 500 kW without manual touch labor. These advances suggest potential pathways toward gigawatt-scale architectures. Current demonstrations, however, remain at kilowatt-class scale, and robot-assembled structures larger than a single launch can deliver still require validation. Starcloud's partnership with Rendezvous to develop robot swarms for autonomous photovoltaic construction targets 4 km × 4 km arrays, with initial demonstrations planned for late 2025 to validate modular docking and integration.
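
The following is a quick cost-and-scale check of the PAPA and Rendezvous figures, using the midpoints of the quoted cost ranges. Two inputs are assumptions made for illustration: the 30% conversion efficiency, and treating the full 4 km × 4 km area as sunlit at normal incidence. The result is therefore an optimistic upper bound.

```python
def array_cost_usd(power_w: float, cost_per_w: float) -> float:
    """Total array cost at a given $/W."""
    return power_w * cost_per_w


def array_power_w(side_m: float, cell_efficiency: float, irradiance: float = 1367.0) -> float:
    """Electrical output of a fully populated square array at normal incidence."""
    return side_m ** 2 * irradiance * cell_efficiency


if __name__ == "__main__":
    p = 500e3  # 500 kW, the array scale cited for PAPA
    for label, cpw in (("legacy midpoint", 300.0), ("PAPA midpoint", 30.0)):
        print(f"{label}: ${array_cost_usd(p, cpw) / 1e6:,.0f}M")
    # Assumed 30% efficiency; no packing, pointing, or degradation losses.
    gw = array_power_w(4000, 0.30) / 1e9
    print(f"4 km x 4 km array at 30% efficiency: ~{gw:.1f} GW")
```

Under these assumptions, a 500 kW array falls from about $150M to about $15M. A fully populated 4 km × 4 km array would produce on the order of 6.6 GW, consistent with the gigawatt-scale ambitions described above.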

Passive Thermal Management: Physics-Imposed Constraints

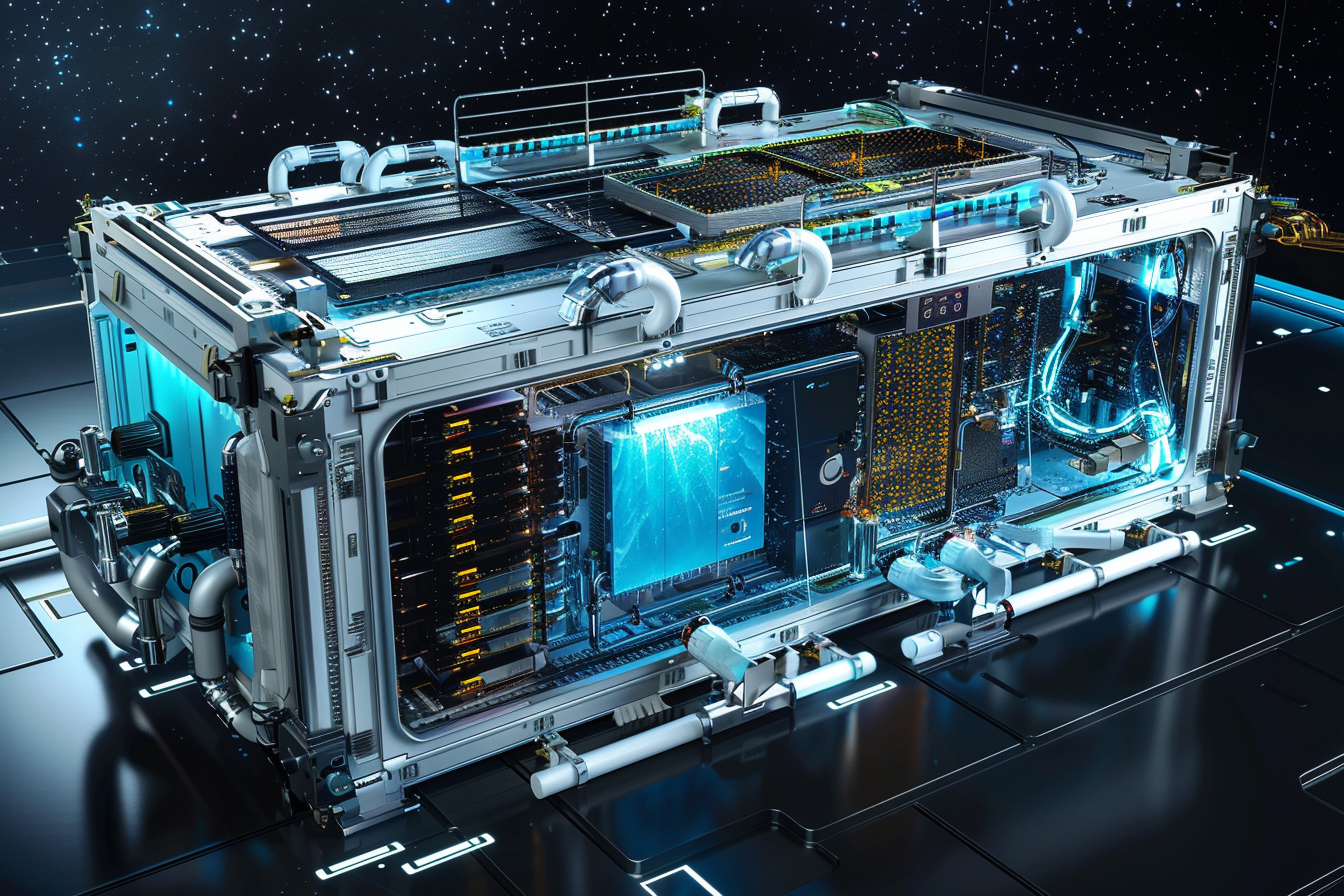

Radiative heat rejection, governed by the Stefan-Boltzmann law, achieves 100-350 W/m² dissipation rates but imposes 5-10 kg/m² mass penalties. For megawatt-class installations, radiator mass frequently equals or exceeds the mass of the computing hardware. Starcloud-1's successful operation of a 700 W H100 GPU via immersion cooling and passive radiators validates kilowatt-scale feasibility. It shows that dielectric-fluid thermal acquisition coupled with deployable radiator panels can hold processor junction temperatures within operational limits during continuous high-utilization workloads.
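
Radiator sizing follows from dividing waste heat by achievable flux. This sketch assumes all electrical power ends up as heat. It also treats the 100-350 W/m² and 5-10 kg/m² ranges from the text as values per unit of radiating surface. It is not a thermal model.

```python
def radiator_area_m2(heat_w: float, flux_w_per_m2: float) -> float:
    """Radiating area needed to reject heat_w at a given net flux."""
    return heat_w / flux_w_per_m2


def radiator_mass_kg(area_m2: float, areal_density_kg_per_m2: float) -> float:
    """Radiator mass at a given areal density."""
    return area_m2 * areal_density_kg_per_m2


if __name__ == "__main__":
    # Assumption: every watt of compute power becomes a watt of waste heat.
    for heat_w in (700.0, 100e3, 1e6):
        best = radiator_area_m2(heat_w, 350.0)   # high flux, small area
        worst = radiator_area_m2(heat_w, 100.0)  # low flux, large area
        print(f"{heat_w / 1e3:g} kW: {best:,.0f}-{worst:,.0f} m^2, "
              f"{radiator_mass_kg(best, 5.0):,.0f}-{radiator_mass_kg(worst, 10.0):,.0f} kg")
```

For 1 MW, this gives roughly 2,900-10,000 m² of radiating surface. The 150-2,000 m² range quoted in this section is smaller, which would be consistent with panels that radiate from both faces. That interpretation is an assumption, not something the source states.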

However, extrapolation to rack-level deployments requiring 150-2,000 m² radiator areas for 100 kW to 1 MW compute loads reveals fundamental constraints on achievable density per launch. The fourth-power temperature relationship in Stefan-Boltzmann physics means modest radiator temperature increases yield substantial heat rejection improvements, creating design incentives for thermal transport systems maintaining elevated radiator temperatures while keeping compute components within safe limits. Heat pipes and loop heat pipes—demonstrated across NASA missions including GOES satellites and ISS thermal control—enable passive thermal transport from immersion cooling reservoirs to radiator surfaces, with variable conductance designs preventing overcooling during eclipse phases in LEO's 90-minute orbital period.
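
The fourth-power relationship can be made concrete with the net flux εσ(T⁴ - T_sink⁴). The emissivity of 0.9 and the 0 K sink are simplifying assumptions. A real LEO radiator also absorbs Earth infrared and albedo, which is why practical net fluxes fall in the lower 100-350 W/m² range.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def net_flux_w_per_m2(t_rad_k: float, emissivity: float = 0.9, t_sink_k: float = 0.0) -> float:
    """Net radiated flux from one face: eps * sigma * (T^4 - T_sink^4).

    Emissivity 0.9 and a 0 K sink are illustrative assumptions; environmental
    heat loads (Earth IR, albedo) are ignored.
    """
    return emissivity * SIGMA * (t_rad_k ** 4 - t_sink_k ** 4)


if __name__ == "__main__":
    base = net_flux_w_per_m2(300.0)
    for t in (300.0, 325.0, 350.0):
        q = net_flux_w_per_m2(t)
        print(f"T = {t:.0f} K: {q:.0f} W/m^2 ({q / base:.2f}x the 300 K value)")
```

Raising the radiator from 300 K to 350 K (about 17% hotter) increases rejected heat per square meter by a factor of (350/300)⁴ ≈ 1.85. That gain is the incentive for running radiators as hot as the electronics allow.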

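These mass penalties push architectures toward distributed constellations rather than monolithic platforms. Required radiator area grows in proportion to heat load, but a spacecraft's body surface grows only with the two-thirds power of its volume. Concentrating compute in one platform therefore shifts more of that area onto large deployable structures. Many smaller satellites, by contrast, keep radiators close to each compute module.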
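
One geometric intuition for favoring many small satellites is the square-cube relationship. Splitting a fixed hardware volume across more spacecraft increases the total body surface available for mounted radiators. The sketch models each satellite as a cube, a deliberate simplification that ignores deployable panels.

```python
def total_surface_area(total_volume_m3: float, n_satellites: int) -> float:
    """Combined outer surface of n equal cubes that together hold total_volume_m3.

    Cube-shaped spacecraft are a simplifying assumption for illustration.
    """
    side = (total_volume_m3 / n_satellites) ** (1.0 / 3.0)
    return n_satellites * 6.0 * side ** 2


if __name__ == "__main__":
    for n in (1, 8, 64):
        print(f"{n:>3} satellites: {total_surface_area(64.0, n):.0f} m^2 of body surface")
```

Dividing 64 m³ of hardware into 8 satellites doubles the body surface from 96 m² to 192 m². Moving to 64 satellites doubles it again, to 384 m². Total surface grows as the cube root of the satellite count.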

Radiation Mitigation: Hybrid Approaches Enabling COTS Performance

Hybrid approaches combining moderate hardware hardening with software-based fault tolerance enable COTS processors to achieve 100× performance improvements over fully radiation-hardened alternatives. HPE's Spaceborne Computer-2, operating on the ISS since February 2021, demonstrates software mitigation through dynamic CPU throttling during South Atlantic Anomaly passages, achieving 20,000× processing speedups for genomic sequencing compared to traditional ground-relay workflows while maintaining zero mission failures across multi-year operations.
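
The throttling idea can be sketched as a simple position-based policy. Everything here is hypothetical. The latitude/longitude box only roughly approximates the South Atlantic Anomaly, and the clock values are placeholders. HPE's actual implementation is not described in the sources, and a flight system might key off onboard radiation sensors instead.

```python
from dataclasses import dataclass

# Hypothetical, rough bounding box for the South Atlantic Anomaly; the real
# region's shape varies with altitude and would come from a radiation model.
SAA_LAT_RANGE = (-50.0, 0.0)
SAA_LON_RANGE = (-90.0, 40.0)


@dataclass
class ThrottlePolicy:
    nominal_mhz: int = 2400  # hypothetical full-speed clock
    safe_mhz: int = 800      # hypothetical conservative clock

    def in_saa(self, lat_deg: float, lon_deg: float) -> bool:
        """True if the sub-satellite point falls inside the SAA box."""
        return (SAA_LAT_RANGE[0] <= lat_deg <= SAA_LAT_RANGE[1]
                and SAA_LON_RANGE[0] <= lon_deg <= SAA_LON_RANGE[1])

    def target_clock_mhz(self, lat_deg: float, lon_deg: float) -> int:
        """Drop to the conservative clock while inside the SAA box."""
        return self.safe_mhz if self.in_saa(lat_deg, lon_deg) else self.nominal_mhz


if __name__ == "__main__":
    policy = ThrottlePolicy()
    for lat, lon in ((-30.0, -45.0), (40.0, 10.0)):
        print(f"lat {lat}, lon {lon}: {policy.target_clock_mhz(lat, lon)} MHz")
```

Trading throughput for fewer radiation-induced faults only during predictable passages keeps the processor at full speed for most of each orbit.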

Starcloud-1's November 2025 demonstration ran Google Gemma and NanoGPT training. It indicates that NVIDIA H100 GPUs, with 80 GB of HBM3 memory and up to 3,958 TOPS of INT8 throughput, can function in LEO radiation environments without a custom radiation-hardened redesign. Proprietary shielding approaches use hydrogen-rich materials, which attenuate radiation more effectively per unit mass than aluminum. In addition, the hydrogen content of organic immersion-cooling fluids adds shielding against secondary particles produced by cosmic-ray interactions.

Microchip Technology's radiation-hardened processors, including the SAMRH707 and SAMRH71, use 32-bit ARM Cortex-M7 cores hardened to 150 krad via silicon-on-insulator processes. They represent the conservative alternative: TRL 9 mission-proven reliability, but 50 MHz operation compared with multi-GHz terrestrial processors. Designers must choose between cutting-edge COTS components with proprietary mitigation at TRL 6-7 and established rad-hard processors at TRL 9 with a one-to-two-generation performance lag. That choice shapes fundamental system architecture and competitive positioning.

Long-term reliability under a cumulative total ionizing dose (TID) of 100+ krad over projected 7-10 year mission lifespans requires flight validation beyond current demonstrations. Radiation-induced degradation mechanisms, including threshold voltage shifts, leakage current increases, and displacement damage accumulation, call for continuous monitoring and predictive fault management.
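
Reaching 100 krad over 7-10 years implies an average of roughly 10-14 krad per year. A simple margin check compares a part's TID rating with the expected mission dose. The constant 10 krad/year rate below is a hypothetical shielded value. Real dose accumulation varies with orbit, shielding, and the solar cycle.

```python
def mission_dose_krad(annual_dose_krad: float, years: float) -> float:
    """Cumulative dose, assuming a constant annual rate (simplification)."""
    return annual_dose_krad * years


def tid_margin(part_rating_krad: float, annual_dose_krad: float, years: float) -> float:
    """Ratio of part TID rating to expected mission dose (>1 means headroom)."""
    return part_rating_krad / mission_dose_krad(annual_dose_krad, years)


if __name__ == "__main__":
    annual = 10.0  # hypothetical shielded dose rate, krad(Si)/year
    for years in (7, 10):
        print(f"{years} yr: dose {mission_dose_krad(annual, years):.0f} krad, "
              f"margin vs 150 krad part {tid_margin(150.0, annual, years):.2f}")
```

Against a 150 krad part (the rating cited for the Microchip processors above), that hypothetical rate leaves a margin of about 2.1× for a 7-year mission and 1.5× for 10 years.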

Optical Inter-Satellite Links and Edge Processing Latency

SpaceX's 9,000+ laser-equipped Starlink satellites achieve 100 Gbps per link while transferring 42 petabytes daily, executing over 250,000 link acquisitions daily across dynamic mesh topologies that maintain 99.99% uptime through continuous reconfigurations adapting to orbital mechanics. Axiom Space's Orbital Data Center nodes demonstrate 2.5 Gbps optical connectivity compatible with Space Development Agency Tranche 1 standards, with planned upgrades to 10+ Gbps and eventual terabit-scale capacity as dense wavelength-division multiplexing technology matures.
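
The Starlink figures can be converted to more intuitive rates. Assuming decimal petabytes (10¹⁵ bytes), 42 PB per day corresponds to an average of roughly 3.9 Tbps across the network. About 2.9 link acquisitions occur every second, and 99.99% uptime leaves under nine seconds of outage per day.

```python
SECONDS_PER_DAY = 86_400


def average_tbps(bytes_per_day: float) -> float:
    """Average network-wide throughput in Tbps for a daily byte volume."""
    return bytes_per_day * 8 / SECONDS_PER_DAY / 1e12


def downtime_seconds_per_day(availability: float) -> float:
    """Outage budget per day implied by an availability fraction."""
    return (1.0 - availability) * SECONDS_PER_DAY


if __name__ == "__main__":
    print(f"42 PB/day ~ {average_tbps(42e15):.2f} Tbps average")
    print(f"250,000 acquisitions/day ~ {250_000 / SECONDS_PER_DAY:.1f} per second")
    print(f"99.99% uptime allows ~{downtime_seconds_per_day(0.9999):.1f} s/day of outage")
```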

These optical inter-satellite link deployments eliminate ground-relay latency for satellite-to-satellite communications. They enable direct mesh routing in place of traditional bent-pipe architectures, which require satellite → ground station → terrestrial network → ground station → satellite paths. Muon Space's integration of Starlink mini-laser terminals (25 Gbps at ranges of 4,000 km) achieved orbit-to-ground latencies in the millisecond range. Conventional architectures, by comparison, impose 20-minute delays while data waits for intermittent downlink windows.
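
Propagation delay explains why laser crosslinks bring latency down to milliseconds. This sketch computes straight-line light time and ignores queuing and processing delays. The bent-pipe path is a hypothetical example: 550 km down and back up, plus 4,000 km of terrestrial fiber, with light in fiber slowed to about two-thirds of c.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum
FIBER_SLOWDOWN = 1.5      # light in fiber travels at roughly 2/3 c (approximation)


def one_way_ms(path_km: float) -> float:
    """Straight-line light time over path_km, in milliseconds."""
    return path_km / C_KM_PER_S * 1000.0


if __name__ == "__main__":
    laser_hop = one_way_ms(4_000)
    # Hypothetical bent pipe: 550 km down, 4,000 km of fiber, 550 km back up.
    bent_pipe = one_way_ms(2 * 550 + 4_000 * FIBER_SLOWDOWN)
    wait_ms = 20 * 60 * 1000  # a 20-minute wait for the next downlink window
    print(f"laser hop {laser_hop:.1f} ms, bent pipe {bent_pipe:.1f} ms, "
          f"window wait {wait_ms:,} ms")
```

Both paths take tens of milliseconds at most. The dominant delay in conventional workflows is waiting for a ground-station contact, not the distance light travels.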

Edge processing architectures demonstrate transformative latency advantages for applications where data originates in orbit. NASA's CogniSAT-6 completes autonomous cloud-free imaging workflows—acquiring look-ahead imagery, processing with onboard AI to identify optimal targets, determining instrument pointing, and capturing planned observations—in under 90 seconds without ground station involvement, compared to traditional multi-hour tasking cycles requiring terrestrial processing and uplink command generation. ESA's Φsat-2 satellite performs deep image compression, cloud detection, street and vessel identification, fire detection, and marine anomaly analysis entirely in orbit using Intel Movidius Myriad 2 vision processors within 6U CubeSat power budgets.
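
The look-ahead step can be sketched as an onboard target-selection function. This is illustrative only. The `Tile` structure, the 20% cloud threshold, and the 30° off-nadir limit are hypothetical, and CogniSAT-6's actual algorithm is not described in the sources.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Tile:
    target_id: str
    cloud_fraction: float  # 0.0 (clear) to 1.0 (overcast), from an onboard classifier
    off_nadir_deg: float   # pointing angle needed to image the tile


def select_target(tiles: list[Tile], max_cloud: float = 0.2,
                  max_off_nadir: float = 30.0) -> Optional[Tile]:
    """Pick the clearest reachable tile from look-ahead imagery, or None to skip the pass."""
    candidates = [t for t in tiles
                  if t.cloud_fraction <= max_cloud and t.off_nadir_deg <= max_off_nadir]
    if not candidates:
        return None
    # Prefer clearer scenes; break ties with the smaller slew.
    return min(candidates, key=lambda t: (t.cloud_fraction, t.off_nadir_deg))


if __name__ == "__main__":
    tiles = [Tile("A", 0.65, 5.0), Tile("B", 0.10, 22.0),
             Tile("C", 0.10, 12.0), Tile("D", 0.05, 41.0)]
    chosen = select_target(tiles)
    print(chosen.target_id if chosen else "skip")  # prints C
```

Tile D is the clearest scene but lies beyond the slew limit. B and C tie on cloud cover, so the smaller pointing maneuver wins.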

For ground-user access, current LEO constellations achieve median end-to-end latencies of 45-80 milliseconds. That compares favorably with 600+ millisecond GEO alternatives but trails sub-50 millisecond terrestrial fiber CDNs in developed regions. This latency profile suits AI inference with caching, educational applications, and many cloud workloads. It falls short of the sub-10 millisecond requirements of high-frequency trading or ultra-responsive gaming. Aggregate orbital capacity of 300-500 Tbps (Starlink alone) remains far below multi-petabit terrestrial data center fabrics. The shortfall creates congestion for distributed AI training, which requires continuous gradient synchronization. Inference workloads remain viable because they transmit compressed results rather than complete datasets.
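
A propagation-delay floor helps separate physics from network overhead. The sketch assumes a 550 km LEO shell and ground sites directly beneath the satellite, so a bent-pipe request and response crosses the altitude four times. The same geometry at GEO already costs about 477 ms, consistent with the 600+ ms figure once processing is added. The LEO floor is only about 7 ms, which suggests that most of the 45-80 ms median comes from routing, backhaul, and queuing.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum


def bent_pipe_rtt_ms(altitude_km: float) -> float:
    """Minimum request/response time user -> satellite -> gateway and back.

    Assumes both ground sites sit directly below the satellite, so the signal
    traverses the altitude four times; slant ranges would only add delay.
    """
    return 4 * altitude_km / C_KM_PER_S * 1000.0


if __name__ == "__main__":
    # 550 km is an assumed LEO shell altitude; 35,786 km is geostationary altitude.
    for name, alt in (("LEO, 550 km", 550.0), ("GEO, 35,786 km", 35_786.0)):
        print(f"{name}: >= {bent_pipe_rtt_ms(alt):.1f} ms round trip")
```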

Technology Readiness and Demonstration Maturity

Current demonstrations span TRL 6-7 for kilowatt-scale prototypes, establishing technical feasibility while revealing the challenges of scaling to operational systems. Starcloud's H100 GPU satellite advances orbital computing to TRL 7 through successful operation in actual LEO conditions. It has validated its radiation shielding, thermal management, and power systems in flight at the start of a projected five-year mission. Axiom's ODC nodes similarly reach TRL 6-7 with initial 2025 launches as free-flying satellites. These nodes demonstrate autonomous thermal management, 2.5 Gbps optical inter-satellite connectivity, and radiation-hardened COTS processor integration.

China's Three-Body Constellation initial cluster consists of 12 satellites launched in May 2025. Each delivers 744 TOPS, with 30 TB of onboard storage and 100 Gbps laser communication links. Together they demonstrate a distributed supercomputing architecture with 5 petaflops of aggregate capacity. The planned 2,800-satellite expansion targeting 1,000 petaflops would represent the first operational-scale space-based supercomputing network, though full deployment extends through the late 2020s.

Google's Project Suncatcher, announced in November 2025, remains at TRL 4-5; its prototype satellites target early 2027 launches in partnership with Planet Labs. The initiative envisions clusters of 81 satellites flying in 1 km radius formations at approximately 650 km altitude in dawn-dusk sun-synchronous orbits. The satellites would carry TPUs and free-space optical links supporting tens of terabits per second via multi-channel dense wavelength-division multiplexing. Economic viability depends critically on SpaceX Starship achieving projected launch costs below $200/kg by the mid-2030s. That price is roughly 7.5 to 12.5 times cheaper than current Falcon 9 costs of $1,500-$2,500/kg. At that level, construction costs would become roughly comparable to terrestrial facilities over 10-20 year operational horizons.
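
The ratio between current and target launch prices is easy to check. The 100 t payload and the $2,000/kg current price (the midpoint of the quoted range) are hypothetical inputs for illustration.

```python
def launch_cost_usd(mass_kg: float, usd_per_kg: float) -> float:
    """Launch spend for a given mass at a given price per kilogram."""
    return mass_kg * usd_per_kg


if __name__ == "__main__":
    target = 200.0  # projected Starship price, $/kg
    for current in (1_500.0, 2_500.0):
        print(f"${current:,.0f}/kg -> ${target:,.0f}/kg is a {current / target:.1f}x reduction")
    mass = 100_000.0  # hypothetical 100 t of hardware
    print(f"100 t to orbit: ${launch_cost_usd(mass, 2_000.0) / 1e6:.0f}M today vs "
          f"${launch_cost_usd(mass, target) / 1e6:.0f}M at target")
```

Prices of $1,500/kg and $2,500/kg correspond to 7.5× and 12.5× reductions, respectively. For 100 t of hardware, launch spend would fall from about $200M to $20M.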

Gigawatt-scale operational systems projected for 2031-2036 require closing critical gaps in enabling technologies that currently sit at TRL 3-5. The gaps fall into three areas:

- Autonomous servicing, essential for 7-10 year operational lifespans because it allows component replacement and hardware upgrades without expensive crewed missions, remains at an early development stage, with NASA's OSAM programs targeting 2030s demonstrations.
- Scalable thermal management for multi-megawatt loads faces fundamental validation gaps: no deployable radiator exceeding 100 m² has been demonstrated on-orbit, leaving multi-megawatt designs reliant on unproven extrapolations from current kilowatt-class systems.
- Terabit-scale optical networking across 100-1,000 satellite constellations requires pointing accuracy below 1 microradian and 99.9% link availability despite orbital drift, vibration, and thermal distortion. These performance levels have been demonstrated in the laboratory but still require extensive flight validation.
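
Two of these networking requirements reduce to simple arithmetic. Under the small-angle approximation, a pointing error θ displaces the beam center by θ × range at the receiver. An availability target fixes the allowed outage time. The ranges below are hypothetical examples.

```python
def beam_offset_m(pointing_error_urad: float, range_km: float) -> float:
    """Small-angle lateral offset of the beam center at the receiver, in meters."""
    return pointing_error_urad * 1e-6 * range_km * 1e3


def allowed_outage_hours_per_year(availability: float) -> float:
    """Outage budget per year implied by an availability fraction."""
    return (1.0 - availability) * 365.25 * 24


if __name__ == "__main__":
    for r in (100.0, 1_000.0, 4_000.0):
        print(f"1 urad at {r:,.0f} km -> {beam_offset_m(1.0, r):.1f} m offset")
    print(f"99.9% availability -> {allowed_outage_hours_per_year(0.999):.1f} h/yr outage")
```

An error of 1 µrad at 1,000 km produces a 1 m offset, and 99.9% availability permits roughly 8.8 hours of outage per year.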

Market Segmentation and Application Viability

Analysis reveals differentiated viability across application domains defined by latency tolerance, bandwidth requirements, and data source location. Satellite edge processing eliminating ground-relay latency for orbital data sources demonstrates immediate commercial feasibility at current technology maturity. Earth observation analytics, satellite communications processing, and space exploration telemetry benefit maximally from onboard computation, reducing hours-scale traditional workflows to seconds or minutes while decreasing downlink bandwidth consumption by up to 80% through intelligent filtering transmitting refined insights rather than complete raw datasets.
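
The downlink saving from onboard filtering can be expressed as ground-station contact time. The 500 GB daily collection and 800 Mbps downlink rate are hypothetical values; only the 80% reduction comes from the text.

```python
def downlink_after_filtering_gb(raw_gb: float, reduction: float) -> float:
    """Volume left to downlink after onboard filtering removes a fraction of the data."""
    return raw_gb * (1.0 - reduction)


def pass_minutes(volume_gb: float, link_mbps: float) -> float:
    """Minutes of ground-station contact needed to move volume_gb at link_mbps."""
    return volume_gb * 8_000.0 / link_mbps / 60.0  # 1 GB = 8,000 Mb (decimal units)


if __name__ == "__main__":
    raw = 500.0   # hypothetical GB collected per day
    link = 800.0  # hypothetical downlink rate, Mbps
    filtered = downlink_after_filtering_gb(raw, 0.80)
    print(f"raw: {pass_minutes(raw, link):.0f} min, filtered: {pass_minutes(filtered, link):.0f} min")
```

With these inputs, filtering shrinks the required contact time from about 83 minutes to about 17 minutes per day.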

AI inference workloads that tolerate 45-80 millisecond latencies make LEO platforms competitive in underserved geographies lacking terrestrial fiber infrastructure. There, they enable educational applications, telemedicine, and remote work previously impractical with 600+ millisecond GEO latencies. The H100, now demonstrated in orbit, offers up to 5× better inference latency than the prior-generation A100 for large-batch workloads. It supports large language model execution, including Google's Gemma, entirely in space.

General-purpose cloud computing and distributed AI training face bandwidth constraints from limited orbital aggregate capacity orders of magnitude below terrestrial data center requirements. Training large language models in distributed fashion across orbital nodes necessitates transferring gigabytes of gradient updates per training step, with network latency directly extending job completion time as GPUs idle waiting for synchronization. While AI training can tolerate up to 100 milliseconds inter-region delay without major performance degradation, the 300-500 Tbps orbital bandwidth creates congestion, packet loss, and retransmissions compounding tail latency beyond acceptable thresholds.
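
The cost of gradient synchronization can be estimated with the standard idealized model for ring all-reduce. In that model, each of n nodes transfers 2(n-1)/n of the gradient over 2(n-1) sequential steps. The gradient size, node count, link rates, and hop latencies below are hypothetical. The model also assumes each node has a dedicated, uncongested link, so real orbital numbers would be worse.

```python
def ring_allreduce_seconds(grad_bytes: float, n_nodes: int,
                           link_gbps: float, hop_latency_ms: float) -> float:
    """Idealized ring all-reduce time for one synchronization.

    Bandwidth term: each node sends/receives 2(n-1)/n of the gradient.
    Latency term: 2(n-1) sequential steps, each paying one hop latency.
    Congestion, packet loss, and retransmission are ignored.
    """
    bandwidth_term = 2 * (n_nodes - 1) / n_nodes * grad_bytes * 8 / (link_gbps * 1e9)
    latency_term = 2 * (n_nodes - 1) * hop_latency_ms / 1000.0
    return bandwidth_term + latency_term


if __name__ == "__main__":
    grad = 10e9  # hypothetical 10 GB of gradients per training step
    orbital = ring_allreduce_seconds(grad, 8, link_gbps=100.0, hop_latency_ms=5.0)
    ground = ring_allreduce_seconds(grad, 8, link_gbps=400.0, hop_latency_ms=0.01)
    print(f"orbital: {orbital:.2f} s/step, terrestrial: {ground:.2f} s/step")
```

Under these assumptions, the orbital ring spends about 1.47 s per step synchronizing, versus about 0.35 s terrestrially. GPUs may sit idle for that time.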

This drives architectural consensus toward hybrid models: terrestrial training in hyperscale data centers with abundant interconnect bandwidth, orbital inference at edge nodes proximate to satellite data sources. The complementary strengths—terrestrial facilities providing communication-intensive distributed training infrastructure, orbital platforms offering continuous solar power and proximity to space-generated data for inference—align with broader edge computing trends distributing processing to minimize data movement.

The orbital data center market projects growth from current demonstration-phase operations requiring $5-15 billion investment with limited profitability (due to $1,500-$2,500/kg launch costs and immature supply chains) toward $1.78 billion by 2029 and $39 billion by 2035. This trajectory assumes successful launch cost reduction below $200/kg via SpaceX Starship operational cadence, component maturation including flight-qualified radiation-hardened GPUs and advanced composite radiators reducing mass by 20-30%, and autonomous repair capabilities enabling 7-10 year satellite lifespans without major interventions.
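
The implied growth rate of the market projection can be checked with the compound annual growth rate formula, CAGR = (end/start)^(1/years) - 1.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    print(f"$1.78B (2029) -> $39B (2035): {cagr(1.78, 39.0, 2035 - 2029):.0%} per year")
```

Growing from $1.78 billion in 2029 to $39 billion in 2035 implies roughly 67% annual growth sustained for six years. That rate shows how heavily the projection depends on the launch-cost and component assumptions listed above.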

Strategic Implications for Investment and Development Positioning

The analysis supports evaluation of commercial platform partnerships and technology positioning decisions during the 2025-2030 orbital computing transition period. Subsystem maturity varies widely. Solar power generation sits at TRL 7-8, building on decades of spacecraft heritage, while multi-megawatt thermal management sits at TRL 3-5 and requires breakthrough validation. Understanding that spread could inform investment allocation and development prioritization, since demonstration-phase ventures require substantial capital deployment before achieving profitability.

The validation that NVIDIA H100 GPUs can execute AI training in orbit despite radiation exposure, demonstrated through Starcloud-1's successful November 2025 mission, establishes technical feasibility for satellite edge processing and AI inference applications while illuminating scaling constraints. Radiator mass penalties of 5-10 kg/m² fundamentally limit achievable compute density per launch, creating economic pressure to maximize computational efficiency (operations per watt) and minimize waste heat through architectural optimizations.

Organizations evaluating orbital infrastructure opportunities may assess application-specific viability distinctions. Satellite-sourced data processing eliminates hours-scale ground-relay latency and demonstrates immediate commercial potential for Earth observation analytics, communications payload processing, and autonomous satellite operations. General-purpose cloud migration faces bandwidth limitations requiring careful workload characterization: inference-dominated applications prove viable, while training-intensive workloads require hybrid architectures maintaining terrestrial hyperscale capacity.

Three trends are converging:

- validated kilowatt-scale hardware demonstrations;
- optical inter-satellite networking achieving 100 Gbps per link across 9,000+ Starlink satellites transferring 42 petabytes daily;
- launch cost trajectories in which SpaceX Starship's targeted 25-launch 2025 cadence precedes projected order-of-magnitude reductions.

Together they suggest potential inflection points in commercial scalability. However, these opportunities remain contingent upon closing critical gaps in autonomous servicing (enabling component replacement without crewed missions), terabit-scale mesh networking (maintaining 99.9% link availability across constellation handovers), and multi-year radiation reliability validation (confirming 7-10 year operational lifespans under continuous high-utilization workloads).

The strategic window for early positioning aligns with ISS retirement timelines approaching 2030, commercial platform deployment schedules including Axiom's ODC network expansion and Google's 2027 prototype launches, and the 2026-2030 period when launch economics and technology maturation trajectories may converge to enable operational TRL 8-9 systems. The $480 billion commercial space economy's computing infrastructure requirements—spanning satellite constellation operations, Earth observation analytics, communications processing, and emerging lunar/cislunar missions—create sustained demand for orbital edge computing capabilities independent of general-purpose cloud migration timelines.

For investors, the differentiation criterion centers on flight-validated hardware demonstrating proprietary radiation shielding and thermal architectures rather than simulation-based feasibility claims. Ventures advancing from conceptual designs to operational demonstrations—as Starcloud achieved with H100 GPU training and Axiom accomplished with free-flying ODC node launches—signal engineering maturity and reduced technical risk compared to early-stage concepts relying on unproven extrapolations.

Research Scope and Analytical Framework

This white paper synthesizes technical validation data from operational orbital computing demonstrations spanning February 2021 through late 2025, including HPE's Spaceborne Computer-2 ISS operations, Starcloud's November 2025 H100 GPU satellite launch, Axiom Space's Orbital Data Center node deployments, China's Three-Body Constellation initial cluster (12 satellites, May 2025), and Google's announced Project Suncatcher prototypes targeting 2027.

Research evaluated peer-reviewed literature on space-qualified photovoltaic systems achieving 95-99% capacity factors through sun-synchronous orbits, radiation-hardened electronics testing documented in NASA's 2025 NSREC Data Workshop Compendium examining COTS component performance under Van Allen belt and cosmic ray exposures, thermal management physics governed by Stefan-Boltzmann principles imposing fundamental trade-offs between radiator temperature and surface area requirements, and optical inter-satellite link performance benchmarks from SpaceX Starlink operations demonstrating 100 Gbps per link across 9,000+ laser-equipped satellites transferring 42 petabytes daily.

Analysis incorporated corporate technical disclosures and white papers detailing proprietary radiation shielding approaches, immersion cooling architectures, and autonomous thermal control systems. NASA Technology Readiness Level assessments establish current maturation at TRL 6-7 for demonstrated prototypes operating in relevant environments, with advancement to TRL 8-9 operational systems requiring validation of autonomous servicing, scalable thermal management, and multi-year radiation reliability. Market projections from industry analysts forecast sector growth from current demonstration-phase operations to $1.78 billion by 2029 and $39 billion by 2035, contingent upon launch cost reductions and technology breakthroughs.

Launch cost trajectory modeling examines SpaceX Starship development cadence, with 25-launch 2025 targets serving as precursor to projected order-of-magnitude reductions enabling sub-$200/kg economics by mid-2030s. A phased maturation framework maps progression from 2025-2027 proof-of-concept demonstrations requiring $5-15 billion investment, through 2028-2030 transition contingent on launch cost achievements and component maturation, 2031-2036 operational deployment of 10-100 MW facilities, toward 2036-2050 gigawatt-scale infrastructure requiring autonomous assembly and in-orbit manufacturing capabilities.

The analytical framework delineates technical validation achievements from remaining gaps. It establishes that current demonstrations validate core subsystems: radiation-tolerant high-performance computing, passive thermal management, optical networking, and edge AI inference. Gigawatt-scale visions, by contrast, remain at TRL 3-5. Reaching them requires fundamental advances in autonomous construction, in-situ resource utilization, and ultra-long-duration reliability prediction, extending substantially beyond current demonstrated capabilities.